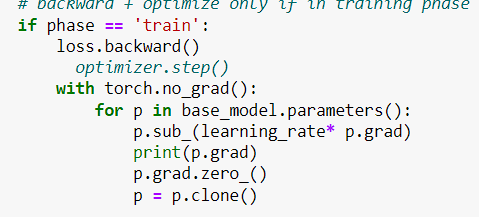

Error: checkpointing is not compatible with .grad(), please use .backward() if possible - autograd - PyTorch Forums

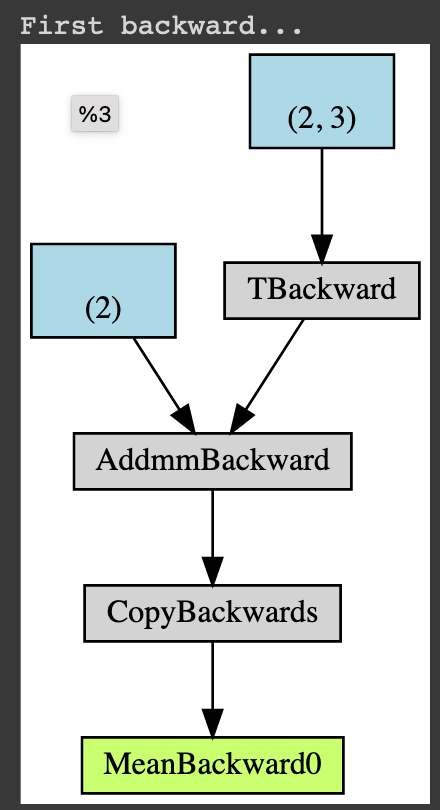

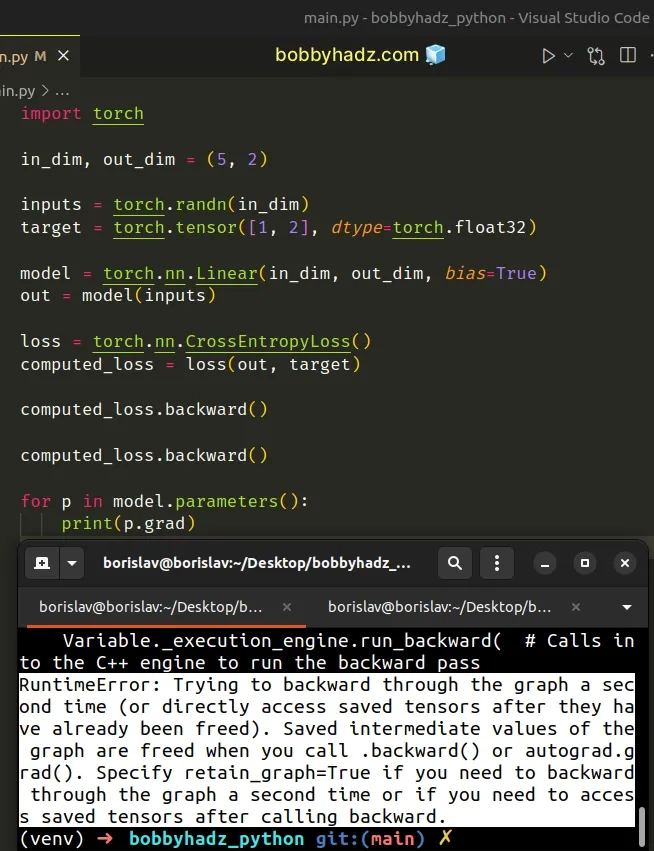

RuntimeError: Trying to backward through the graph a second time, but the buffers have already been freed. Specify retain_graph=True when calling backward the first time - #57 by Eis - PyTorch Forums

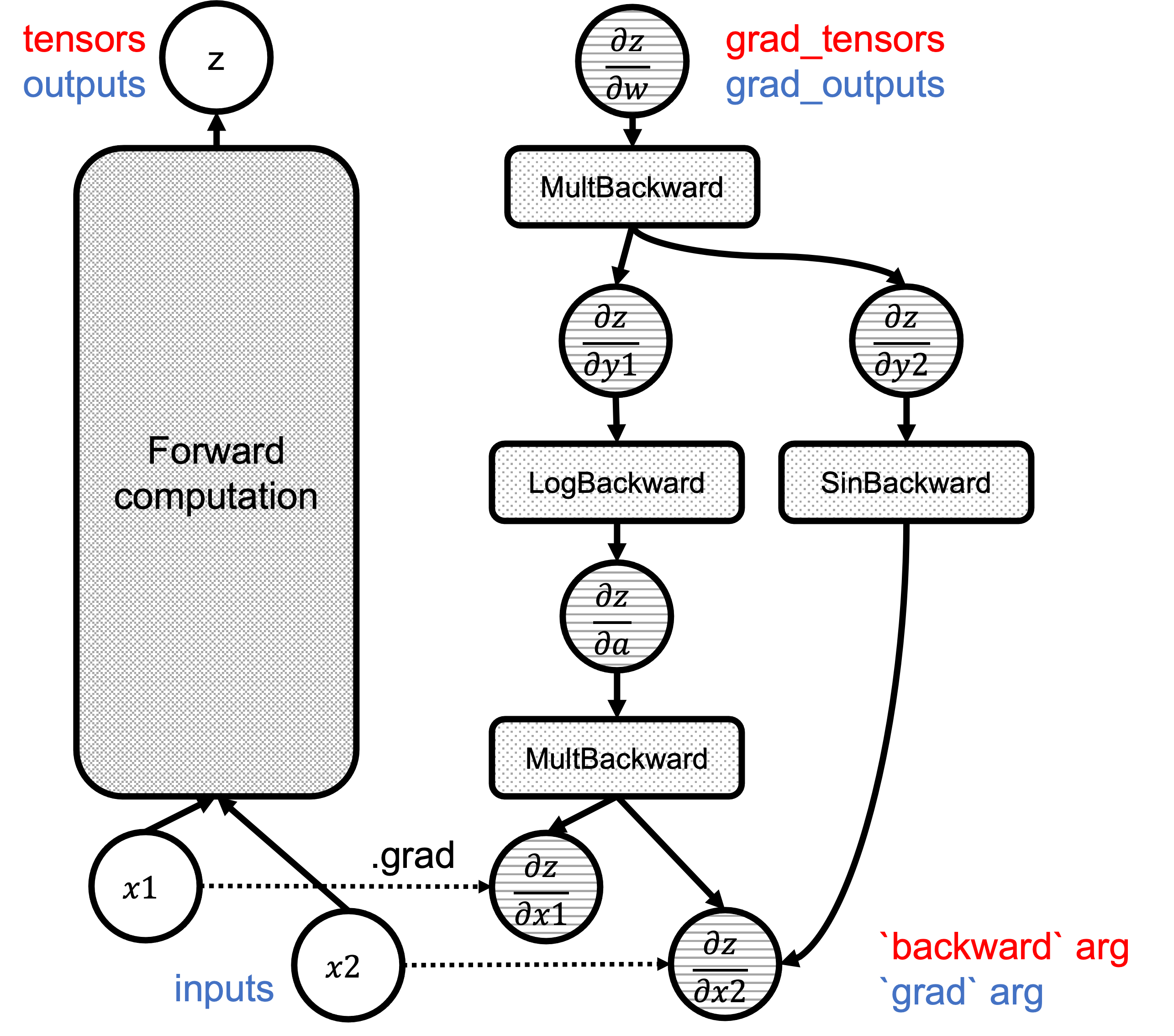

![Back-propagation Demystified [Part 2] | My Journey with Deep Learning and Computer Vision](https://expoundai.files.wordpress.com/2019/09/pytorch_tensor_function.png)