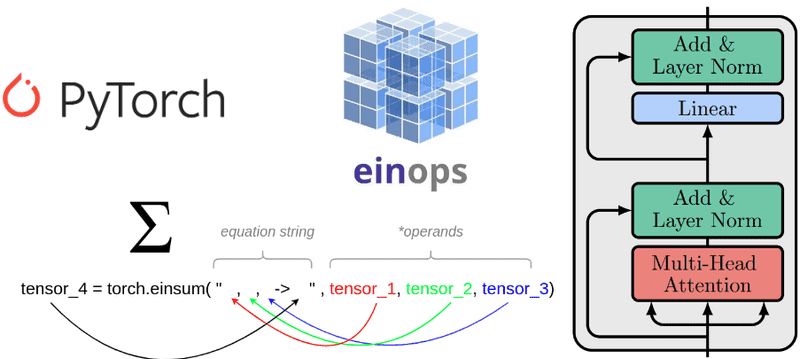

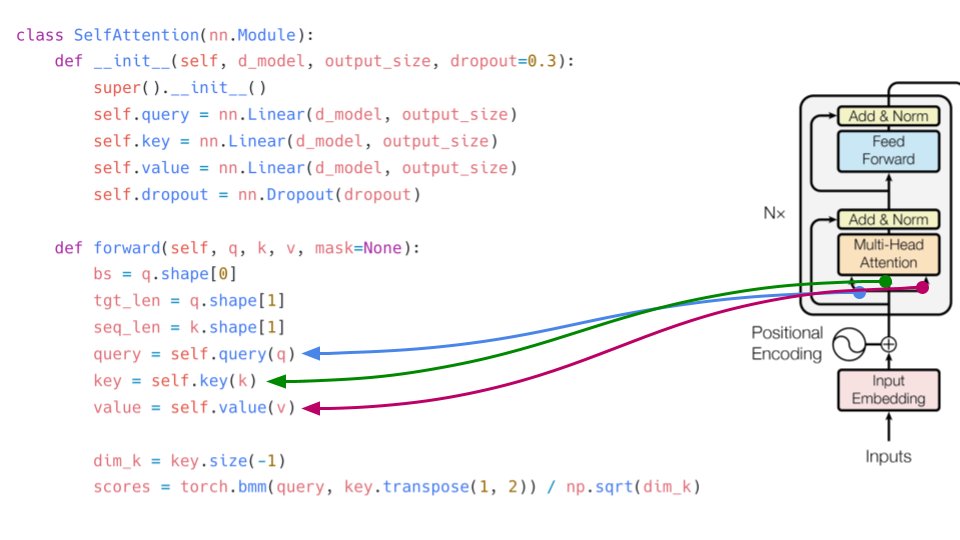

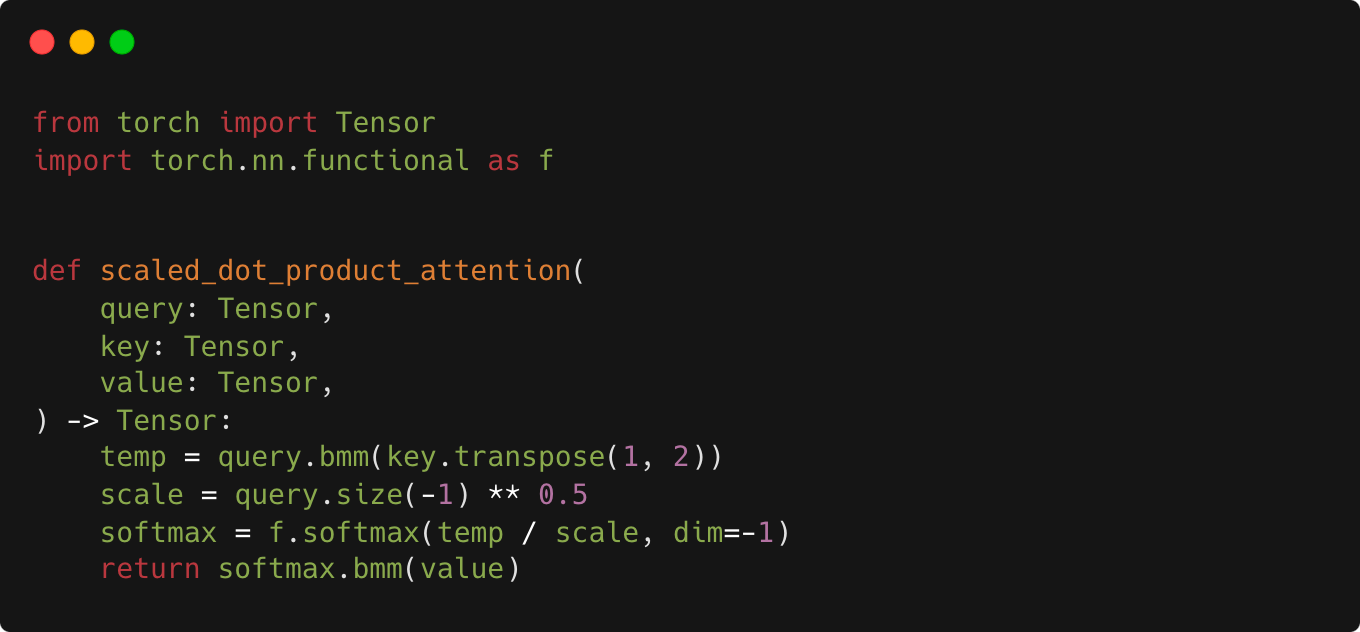

Understanding einsum for Deep learning: implement a transformer with multi-head self-attention from scratch | AI Summer

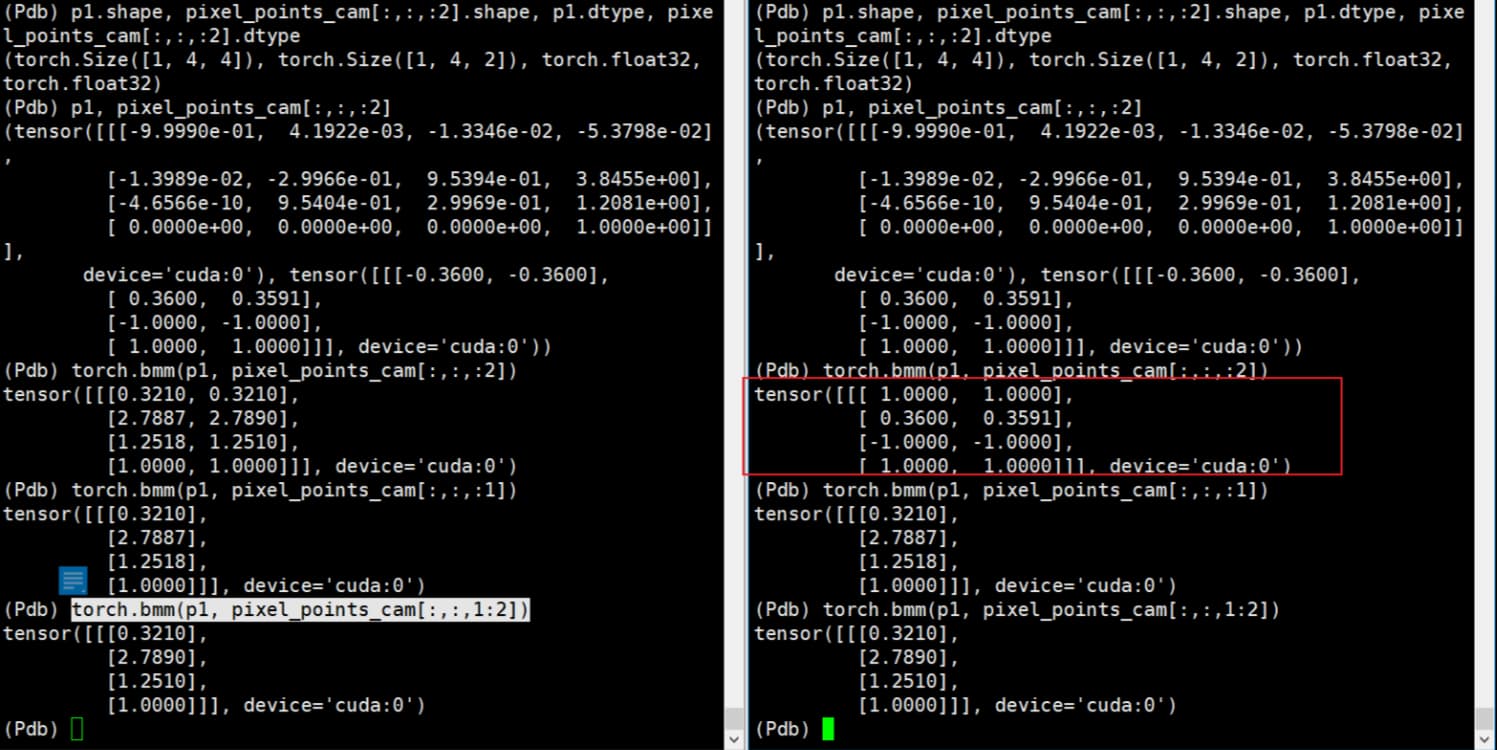

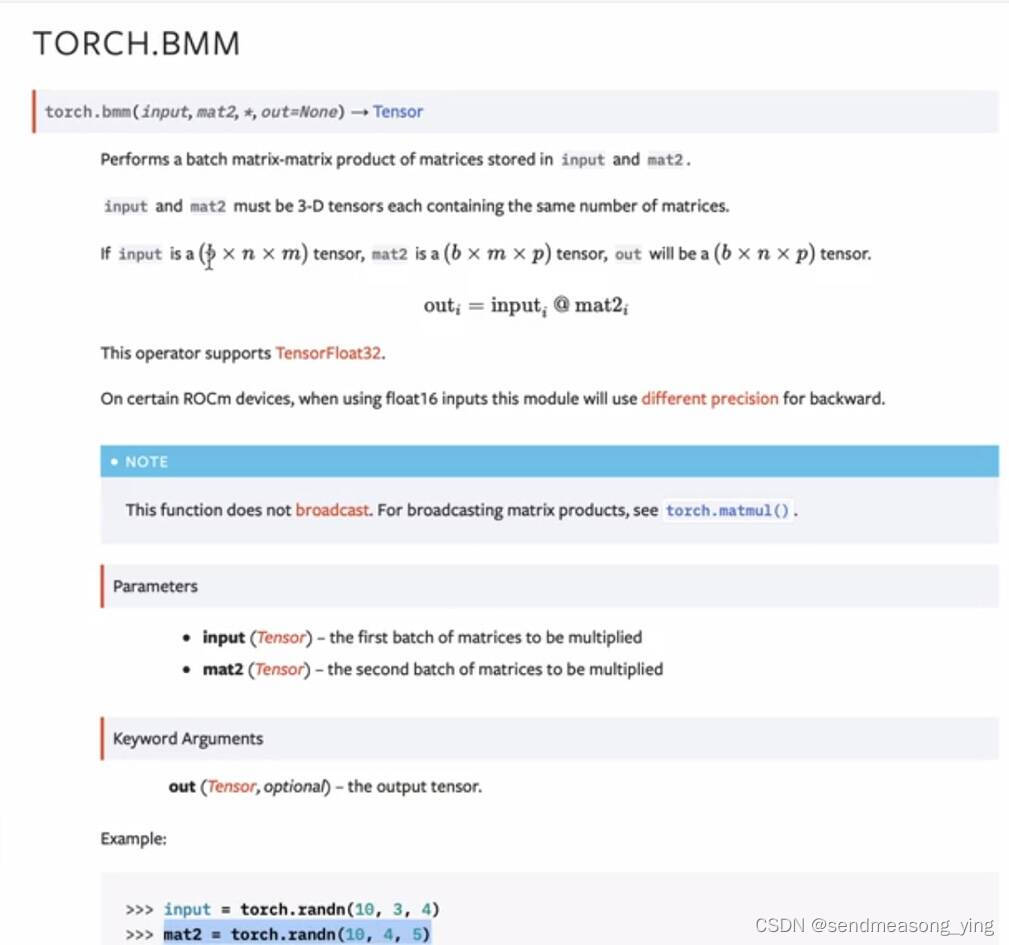

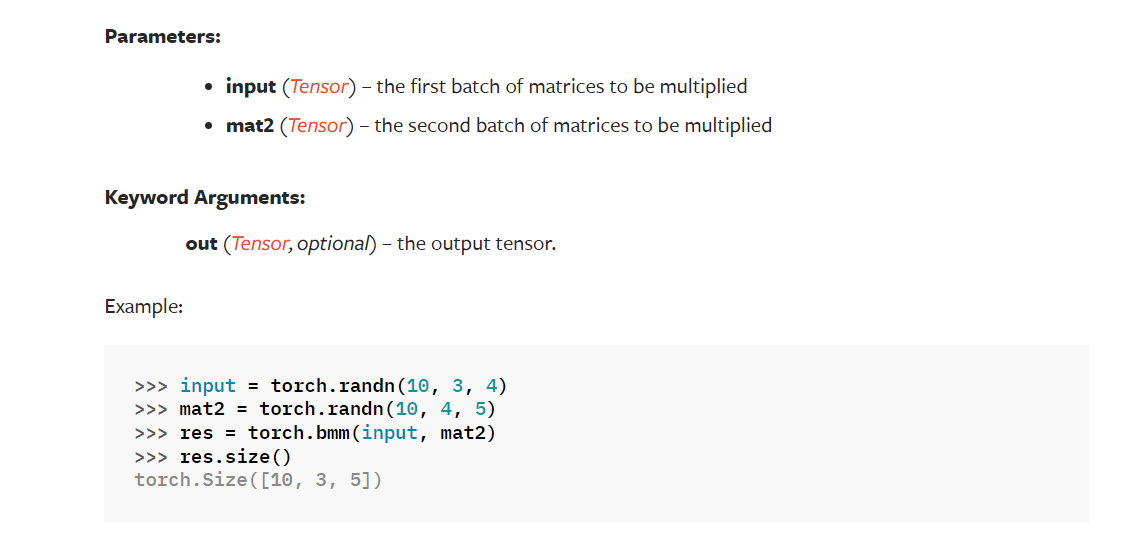

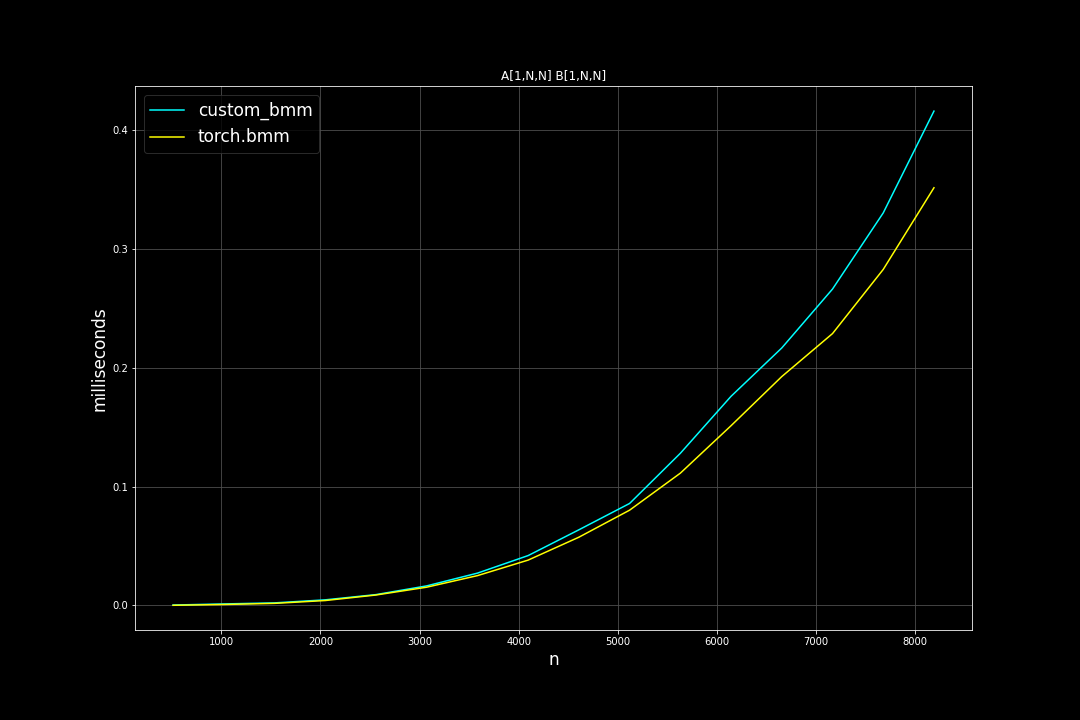

difference between torch.bmm and a batch of torch.mm become larger when matrix dimension become smaller · Issue #47154 · pytorch/pytorch · GitHub

Documentation and `torch.sparse` alias for `torch.bmm` sparse-dense · Issue #43904 · pytorch/pytorch · GitHub

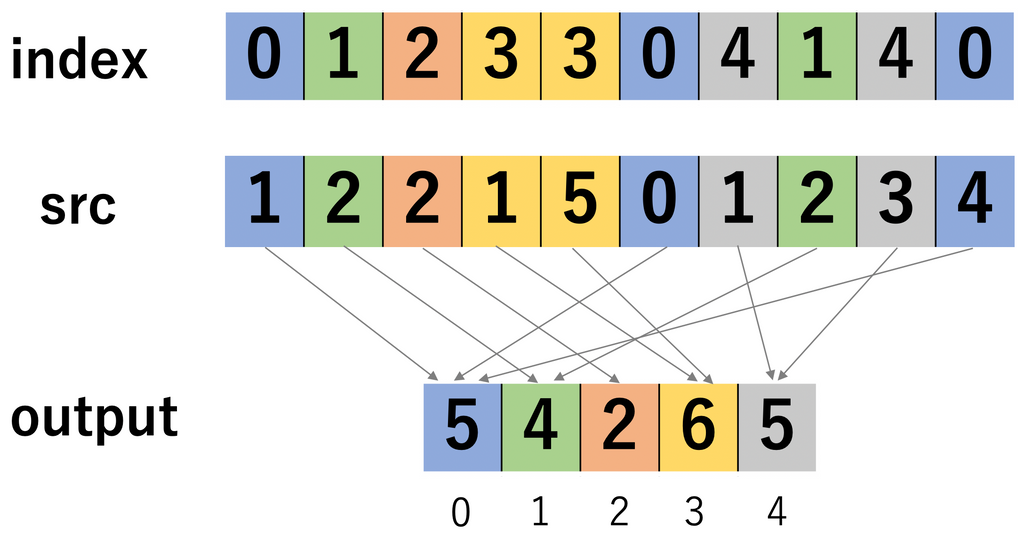

![Tensor products in torch – 行李の底に収めたり[YuWd]](https://gyazo.com/e7244bb7be706ae0207cedea532c0a55.jpeg)