Saving a Pytorch neural net (torch.autograd.grad included) as a Torch Script code - jit - PyTorch Forums

RuntimeError: derivative for aten::mps_linear_backward is not implemented · Issue #92206 · pytorch/pytorch · GitHub

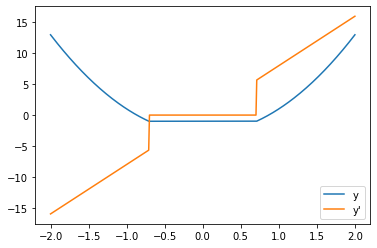

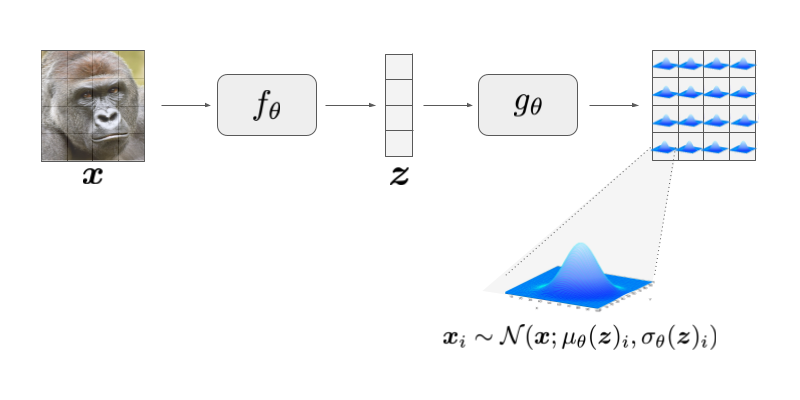

A somewhat mathematical introduction to Gaussian and Laplace autoencoders | christopher-beckham.github.io

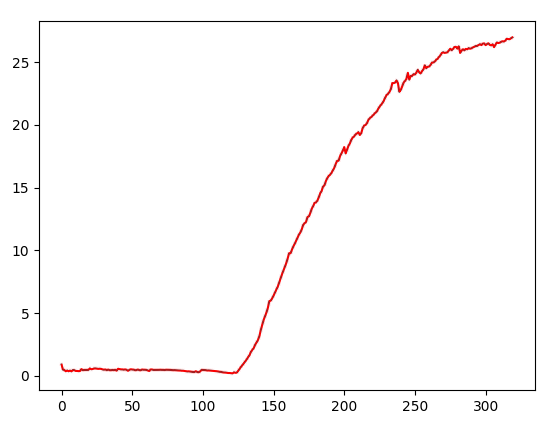

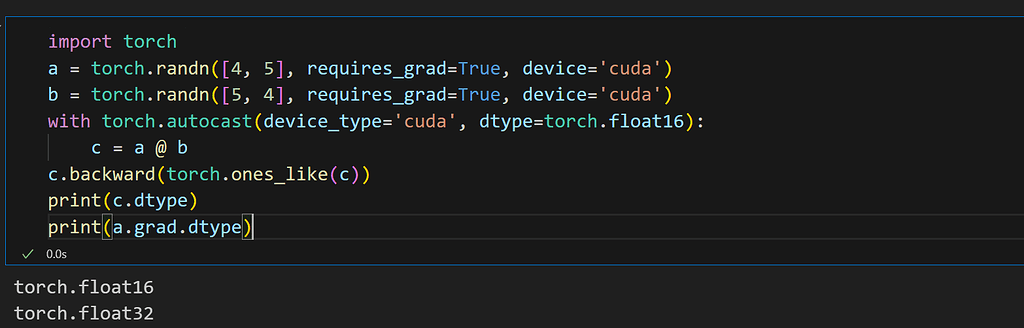

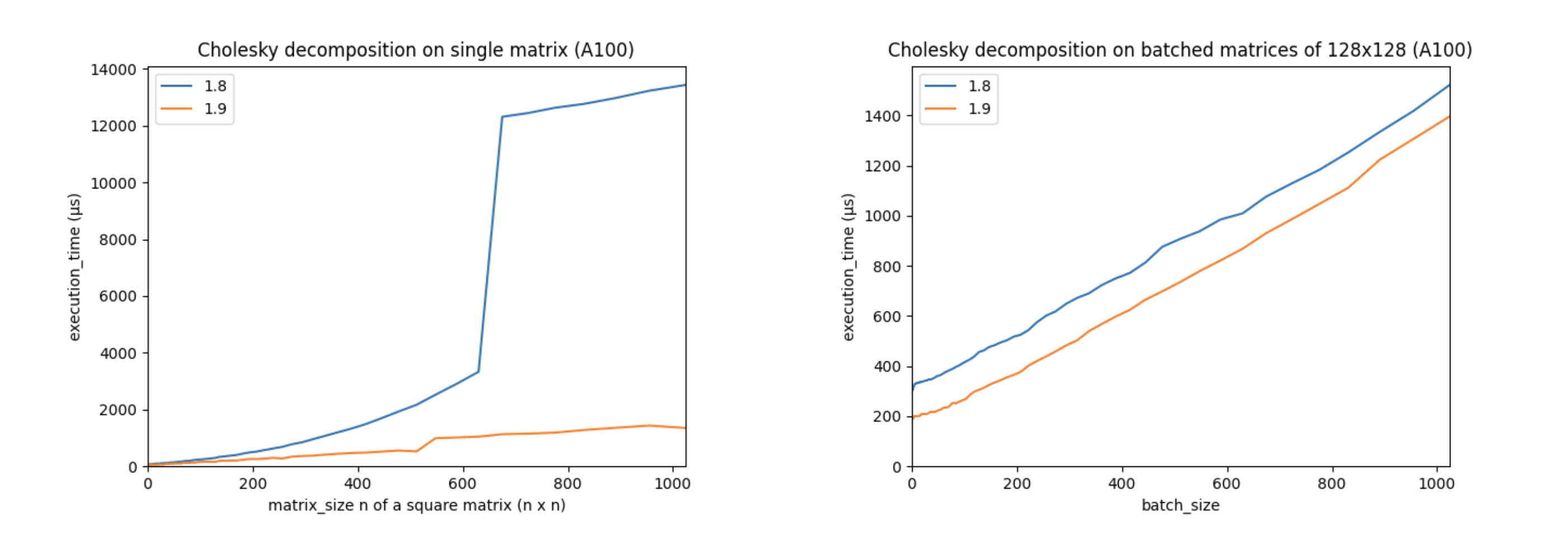

How Pytorch 2.0 Accelerates Deep Learning with Operator Fusion and CPU/GPU Code-Generation | by Shashank Prasanna | Towards Data Science

Missing grad_fn when passing a simple tensor through the reformer module. · Issue #29 · lucidrains/reformer-pytorch · GitHub

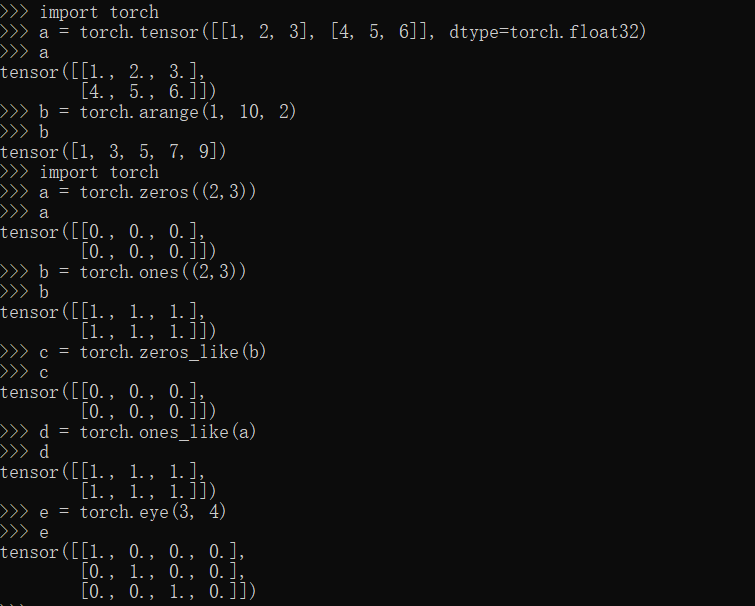

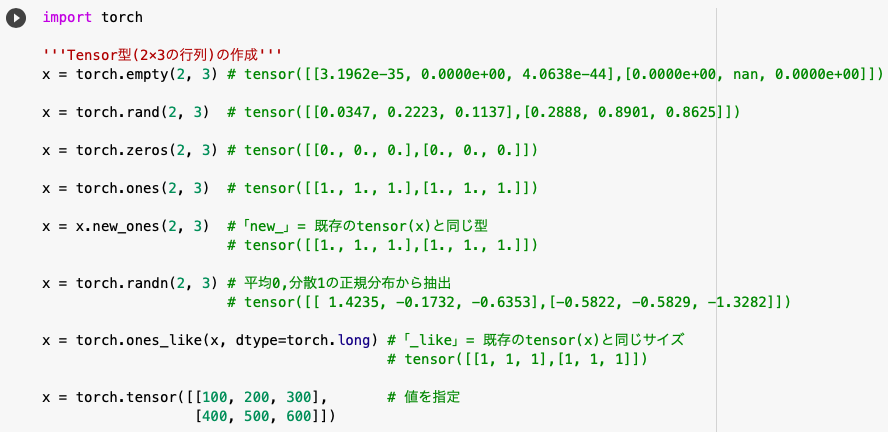

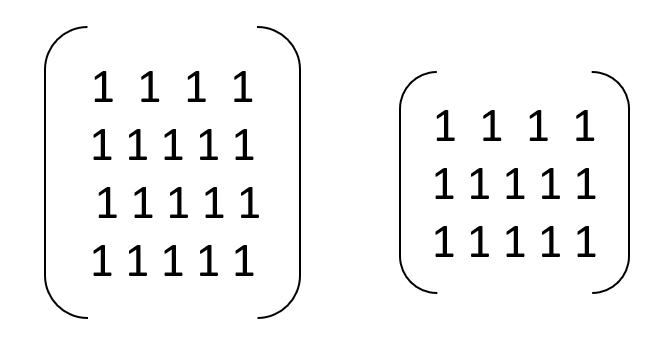

Creating Ones Tensor in PyTorch with torch.ones and torch.ones_like - MLK - Machine Learning Knowledge

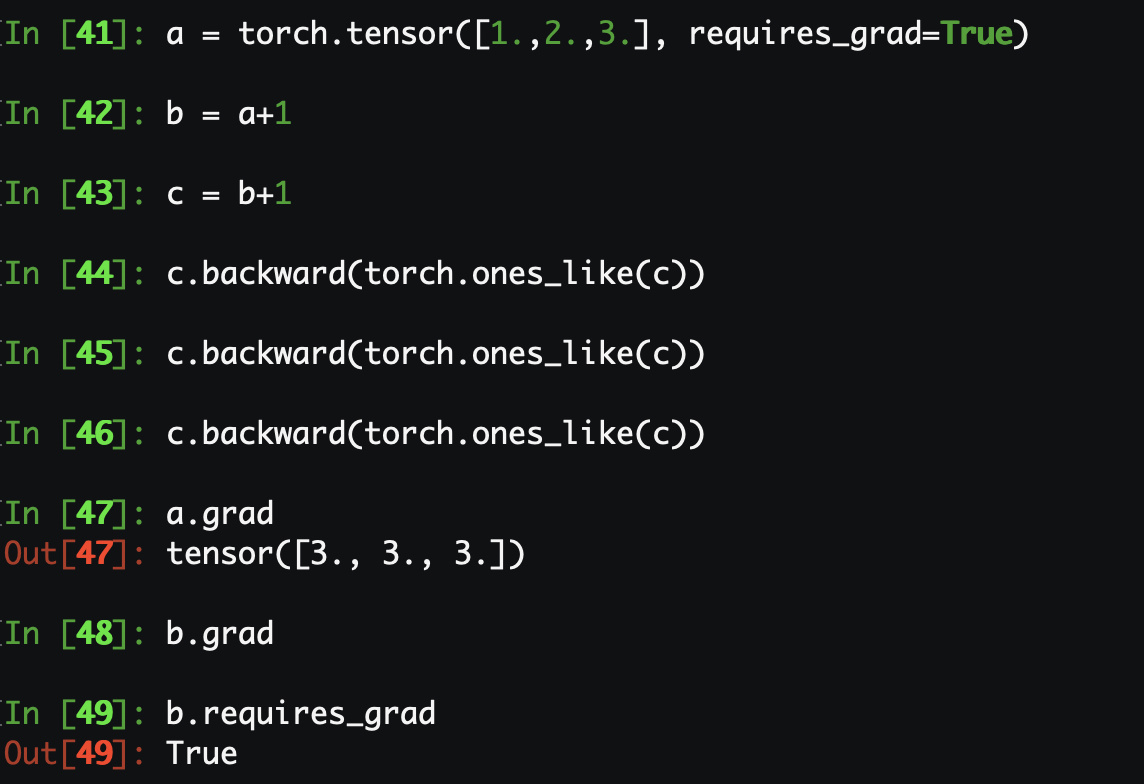

RuntimeError: Trying to backward through the graph a second time, but the buffers have already been freed. Specify retain_graph=True when calling backward the first time - #57 by Eis - PyTorch Forums

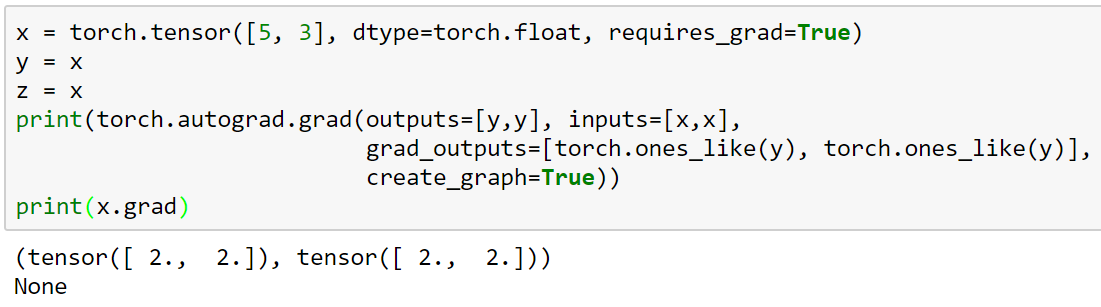

Autograd.grad accumulates gradients on sequence of Tensor making it hard to calculate Hessian matrix - autograd - PyTorch Forums